
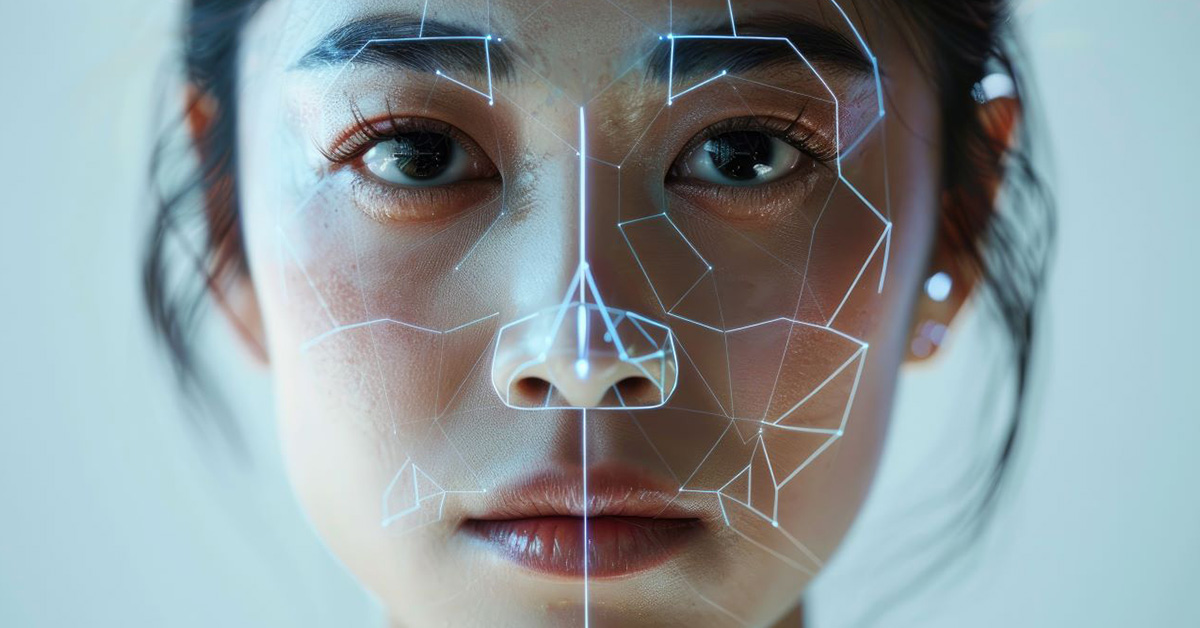

In 2026, the phrase “seeing is believing” has officially retired. We live in an era where AI can clone your voice from a 30-second clip and swap your face into a high-definition video in minutes. Whether it’s a “bank manager” video call scam or unauthorized synthetic likenesses, the threat to your digital identity is real.

From the spiritual heart of Vrindavan to the global digital stage, protecting your “Digital Likeness” is no longer optional; it’s a fundamental part of modern digital hygiene.

What Exactly is Your “Digital Likeness”?

Your digital likeness is the sum of your biometric identity: your voice, face, mannerisms, and even your unique gait. In legal terms, these are increasingly protected as “digital replicas.” In the wrong hands, such replicas can be weaponized for fraud, social engineering, and reputational harm.

5 Practical Steps to Protect Yourself Today

1. Poison the AI Scrapers

AI models are trained on public data. You can proactively protect your photos using “cloaking” or “data poisoning” tools.

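- The Hack: Run images through a tool like Glaze or Nightshade before uploading them. These add subtle pixel-level changes, barely visible to humans, that are designed to disrupt AI models trained on the image. Both were built primarily to protect artwork, so treat them as one layer of defense for personal photos rather than a guarantee.

- The Result: Your photos become harder for unauthorized AI training to “digest.”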

2. Audit Your “High-Definition” Footprint

Deepfakes require high-quality source material.

- The Action: Avoid posting ultra-HD close-ups of your face or long, clear videos with consistent lighting.

- The Fix: Lower the resolution of public social media uploads or use filters that subtly alter facial geometry.
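To make “lower the resolution” concrete, here is a minimal Python sketch that works out the reduced dimensions for a photo before you export it. The function name `downscaled_size` and the 1080-pixel cap are illustrative assumptions, not a recommended standard; pick whatever limit suits the platform you post on.

```python
def downscaled_size(width: int, height: int, max_edge: int = 1080) -> tuple[int, int]:
    """Return (width, height) scaled so the longer edge is at most max_edge.

    max_edge=1080 is an assumed, illustrative cap, not an official threshold.
    """
    if width <= 0 or height <= 0 or max_edge <= 0:
        raise ValueError("width, height, and max_edge must be positive")

    longest = max(width, height)
    if longest <= max_edge:
        # Already small enough; never upscale.
        return width, height

    # Scale both edges by the same factor to preserve the aspect ratio.
    scale = max_edge / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    # A 4000x3000 camera photo shrinks to fit inside the assumed cap.
    print(downscaled_size(4000, 3000))
```

Feed the resulting size into the resize option of whichever photo editor or image library you already use, then upload the smaller copy instead of the original.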

3. Implement a “Family Safe-Word”

Voice cloning is the fastest-growing deepfake threat in 2026.

- The Protocol: Establish a secret phrase or “safe-word” with your family and team members.

- The Use-Case: If you receive an urgent video or voice call from a loved one asking for money or sensitive data, ask for the safe-word. If they can’t provide it, treat the call as a deepfake, no matter how real it looks or sounds.
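For families, the safe-word lives in your heads. If a team wants to check the phrase in software (a help-desk script, for instance), a rough sketch using only Python's standard library might look like the following. The function names, the demo salt, and the iteration count are all assumptions made for illustration; the idea is simply to store a salted hash instead of the phrase itself and to compare in constant time.

```python
import hashlib
import hmac
import unicodedata


def _normalize(phrase: str) -> bytes:
    # Fold case and collapse whitespace so "Blue  Lotus" matches "blue lotus".
    text = unicodedata.normalize("NFKC", phrase).casefold()
    return " ".join(text.split()).encode("utf-8")


def hash_safe_word(phrase: str, salt: bytes) -> bytes:
    # Store only this derived hash, never the safe-word itself.
    # 100_000 iterations is an assumed, illustrative work factor.
    return hashlib.pbkdf2_hmac("sha256", _normalize(phrase), salt, 100_000)


def caller_is_verified(spoken: str, stored_hash: bytes, salt: bytes) -> bool:
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(hash_safe_word(spoken, salt), stored_hash)


if __name__ == "__main__":
    salt = b"demo-salt"  # hypothetical; use a random salt in practice
    stored = hash_safe_word("Blue Lotus 42", salt)
    print(caller_is_verified("  blue lotus 42 ", stored, salt))
    print(caller_is_verified("red lotus", stored, salt))
```

The safe-word only works if it stays off email, chat, and social media, so agree on it in person and change it if you suspect it has leaked.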

4. Leverage AI Detection Tools

Fight AI with AI. Several platforms now offer real-time screening for individuals and businesses.

- Top 2026 Tools:

  - Reality Defender: Multimodal screening for video, audio, and images.

  - Pindrop Pulse: Specifically optimized for detecting audio/voice cloning.

  - Intel FakeCatcher: Uses biological signals (like blood flow in the face) to detect manipulation.

5. Exercise Your “Right to Erasure”

Under frameworks like India’s Digital Personal Data Protection (DPDP) Act, 2023 and the updated 2026 IT Rules, citizens have a “Zero-Tolerance” shield against deepfakes.

- The Law: Platforms in India are now legally bound by the 3-Hour Rule: they must remove court-ordered or law-enforcement-directed deepfakes within 180 minutes.

- The Action: If you find a deepfake of yourself, report it immediately. Platforms now face significant fines for failing to “unlearn” or delete unauthorized synthetic likenesses.
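If you are tracking a takedown request, the 180-minute window is easy to compute. The sketch below is only an illustration: the function names are made up, the example date is hypothetical, and it assumes the clock starts the moment the platform receives the order, which the rules themselves may define differently.

```python
from datetime import datetime, timedelta, timezone

# The 3-hour (180-minute) window described above.
TAKEDOWN_WINDOW = timedelta(minutes=180)

# Indian Standard Time is UTC+05:30.
IST = timezone(timedelta(hours=5, minutes=30))


def takedown_deadline(order_received_at: datetime) -> datetime:
    """Return the latest time by which the content should be removed.

    Assumes the window starts when the order is received (an assumption).
    """
    if order_received_at.tzinfo is None:
        raise ValueError("use a timezone-aware datetime")
    return order_received_at + TAKEDOWN_WINDOW


def is_overdue(order_received_at: datetime, now: datetime) -> bool:
    # Overdue only once the current time is strictly past the deadline.
    return now > takedown_deadline(order_received_at)


if __name__ == "__main__":
    received = datetime(2026, 3, 1, 10, 0, tzinfo=IST)  # hypothetical example
    print(takedown_deadline(received).isoformat())
```

Noting the exact time you filed your report gives you a clear reference point if you later need to escalate.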

How to Spot a Deepfake (Manually)

Although deepfakes keep getting better, look for these “glitches in the matrix”:

- Unnatural Blinking: Many deepfakes still struggle with a natural blinking rhythm.

- The Shadow Mismatch: Check if the shadows on the face match the lighting of the background.

- Audio Desync: Watch the lips closely; deepfake audio often drifts slightly out of sync with the lip movement.

Conclusion: Sovereignty in a Synthetic World

Your digital likeness is your most valuable asset in 2026. By adopting Digital Minimalism, tightening your privacy settings, and using AI protection tools, you reclaim control over your identity.

Don’t wait for a breach. Secure your “Silver Scoop” of digital privacy today.

FAQs

1. Can someone really clone my voice from a short clip?

Yes. In 2026, AI can create a convincing voice clone from as little as 30 seconds of clear audio found on social media or in public recordings.

2. What should I do if I find a deepfake of myself?

Report it to the platform immediately. Under 2026 IT Rules, platforms must act within a strict 3-hour window for high-risk content like impersonation or non-consensual imagery.

3. Are there free tools to protect my photos from AI?

Yes. Tools like Glaze and Nightshade are available to the public and help prevent AI models from accurately “reading” and training on your personal images.
