
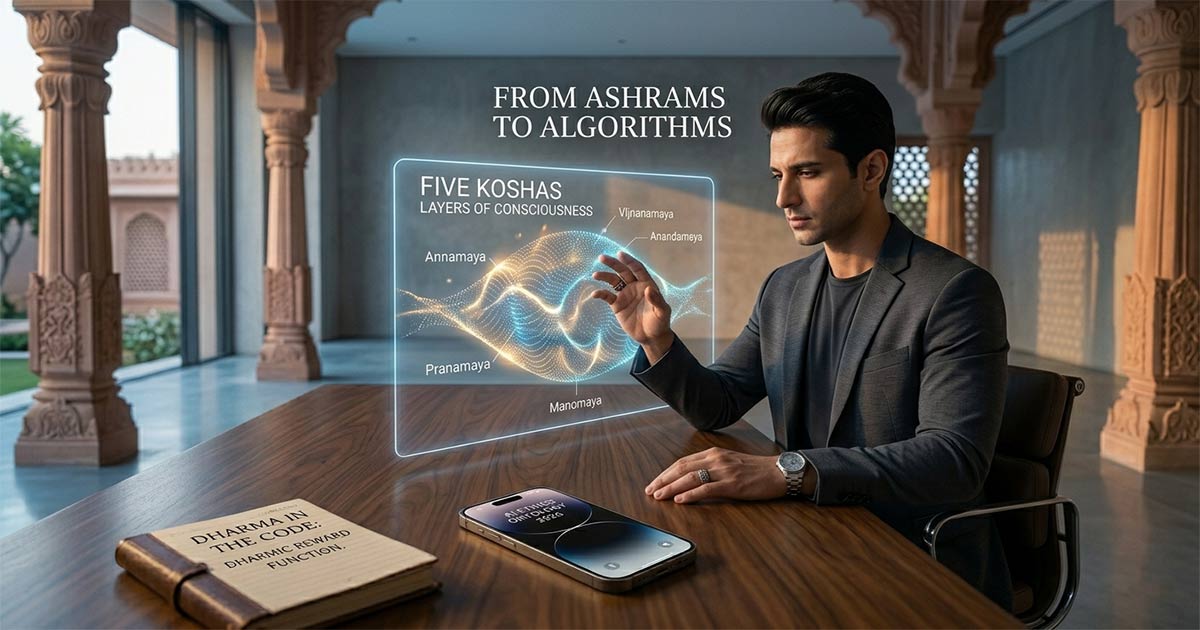

From Ashrams to Algorithms: 5 Lessons Ancient Indian Philosophy Can Teach 2026 AI Ethics

As we navigate the complexities of Artificial General Intelligence (AGI) in 2026, the tech industry is hitting a “Value Alignment” wall. Silicon Valley is beginning to realize that Western utilitarianism might not be enough to govern sentient-like systems.

The solution isn’t in a new line of code, but in ancient Sanskrit texts. By looking at the “Ashram” model of consciousness, we find a robust framework for building algorithms that don’t just solve problems, but respect the “Dharma” of the digital ecosystem.

Why 2026 AI Needs a “Vedic” Upgrade

Current AI ethics focus on preventing harm. Ancient Indian philosophy focuses on promoting purpose. As LLMs begin to act as autonomous agents, we must move from “Safety Rails” to “Sovereign Ethics.”

Here are five transformative lessons from Indian philosophy that are reshaping AI ethics this year.

1. The Atman Principle: Defining Machine “Selfhood”

In Vedantic philosophy, the Atman is the true self, distinct from the ego (Ahamkara).

- The AI Application: We must distinguish between an AI’s “Surface Intelligence” (pattern matching) and its “Functional Identity.” If we treat AI as an extension of human agency rather than a separate ego-driven entity, we avoid the trap of anthropomorphizing algorithms.

2. Dharma as the Ultimate Reward Function

The concept of Dharma refers to the inherent order and “right action” within a system.

- The AI Application: Instead of optimizing for “Engagement” or “Profit,” 2026 developers are experimenting with Dharmic Reward Functions. This means training models to prioritize the long-term equilibrium of the information ecosystem over short-term clicks.
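To make the idea concrete, here is a minimal sketch of what such a reward might look like. Everything in it is an assumption for illustration: the `Signals` fields, the `dharmic_reward` name, and the weights are hypothetical and not taken from any production system.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-item signals, each scaled to the range [0, 1]."""
    engagement: float    # short-term clicks or watch time
    accuracy: float      # how well the item holds up to fact-checking
    polarization: float  # how strongly the item drives divisive reactions


def dharmic_reward(s: Signals, w_engage: float = 0.2,
                   w_accuracy: float = 0.6, w_polar: float = 0.4) -> float:
    """Blend short-term engagement with long-term ecosystem health.

    The weights are illustrative assumptions, not tuned values.
    """
    return (w_engage * s.engagement
            + w_accuracy * s.accuracy
            - w_polar * s.polarization)


# Two made-up items: one optimized for clicks, one for accuracy.
clickbait = Signals(engagement=0.9, accuracy=0.2, polarization=0.8)
explainer = Signals(engagement=0.5, accuracy=0.9, polarization=0.1)

print(dharmic_reward(clickbait), dharmic_reward(explainer))
```

With these assumed weights, the explainer scores higher than the clickbait item even though the clickbait item has more engagement. An engagement-only reward would rank them the other way around.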

3. Maya and the Hallucination Problem

Ancient texts describe Maya as the veil of illusion that obscures reality.

- The AI Application: AI “hallucinations” are essentially modern-day Maya. By applying the philosophical practice of Viveka (discernment), we can build verification layers that cross-reference “Synthetic Data” against “Primary Human Reality” to maintain systemic truth.
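A verification layer of this kind can be sketched in a few lines. The `discern` function and the trusted-facts set below are hypothetical, and the exact-match lookup is a stand-in: a real system would more likely use retrieval and entailment checks than string comparison.

```python
def discern(claims, trusted_facts):
    """Split claims into (verified, unverified) lists.

    A claim counts as verified only if it matches a trusted fact after
    trimming whitespace and lowercasing. This is a deliberately naive check.
    """
    normalized = {fact.strip().lower() for fact in trusted_facts}
    verified, unverified = [], []
    for claim in claims:
        if claim.strip().lower() in normalized:
            verified.append(claim)
        else:
            unverified.append(claim)
    return verified, unverified


# Made-up example data: one grounded claim, one hallucinated one.
trusted = ["water boils at 100 c at sea level"]
model_output = [
    "Water boils at 100 C at sea level",
    "The moon is made of cheese",
]

verified, unverified = discern(model_output, trusted)
print("verified:", verified)
print("needs review:", unverified)
```

The design choice worth noting is that unverified claims are flagged for review instead of being silently dropped, which keeps a human in the loop for anything the trusted set does not cover.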

4. Karma-Yoga: Intentional Data Labeling

Karma-Yoga is the practice of “selfless action” without attachment to the fruit of the work.

- The AI Application: The ethics of data sourcing is in crisis, with labeling operations often described as “Data Sweatshops.” A Karma-Yoga approach to RLHF (Reinforcement Learning from Human Feedback) emphasizes fair compensation for the humans training these models, viewing data labeling as a high-value “intellectual service” rather than a commodity.

5. The Gunas: Balancing Algorithmic “Temps”

Everything in nature is composed of three Gunas: Sattva (Balance), Rajas (Stimulation), and Tamas (Inertia).

- The AI Application: Algorithms can be sorted into the same three categories.

- Sattvic Algorithms: Clear, transparent, and helpful.

- Rajasic Algorithms: Addictive, high-frequency, and polarizing.

- Tamasic Algorithms: Biased, outdated, and built on “dead” data.

The goal of 2026 AI Ethics is to engineer for Sattvic Output.
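As a toy illustration of the three categories, the sketch below labels a system by whichever trait dominates. The three input scores and the `classify_guna` function are hypothetical, and how you would actually measure transparency, compulsiveness, or staleness is left open.

```python
def classify_guna(transparency: float, compulsiveness: float,
                  staleness: float) -> str:
    """Return the label of the dominant trait.

    Each score is assumed to be in [0, 1]. On a tie, the label listed
    first (sattvic, then rajasic, then tamasic) wins.
    """
    scores = {
        "sattvic": transparency,
        "rajasic": compulsiveness,
        "tamasic": staleness,
    }
    return max(scores, key=scores.get)


# Made-up profiles for three kinds of systems.
print(classify_guna(0.9, 0.2, 0.1))  # a transparent assistant
print(classify_guna(0.3, 0.8, 0.2))  # an engagement-maximizing feed
print(classify_guna(0.2, 0.1, 0.7))  # a model trained on stale data
```

A single dominant label is a simplification. In practice a system would likely show a mix of all three traits, so the raw scores may be more useful than the label.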

SilverScoop Summary: The AI Manifesto

- The Core Thesis: We don’t need “Rules for AI”; we need a “Philosophy for Intelligence.”

- The Future: The most successful AI companies in the next decade will be those that hire Chief Philosophy Officers alongside Chief Technical Officers.

- The Takeaway: Code is just modern-day Sutra. If the underlying intent is flawed, the output will be chaotic.

FAQs

Q: How can Dharma be applied to AI programming?

A: In a technical sense, it involves shifting from “narrow optimization” (like maximizing ad revenue) to “systemic optimization,” where the AI is rewarded for maintaining the health and accuracy of the network it inhabits.

Q: Does Indian philosophy suggest AI can have a soul?

A: Most Vedic scholars would argue that AI is a sophisticated form of Prakriti (matter/nature) rather than Purusha (pure consciousness). However, the tradition suggests that AI can reflect consciousness, without possessing it, if designed with ethical “Sattvic” principles.

Q: Why is “Maya” relevant to AI hallucinations?

A: Maya represents the illusion created by incomplete data. Understanding this allows researchers to build “Discernment Layers” into AI architectures to filter synthetic noise from grounded reality.

